You've got it

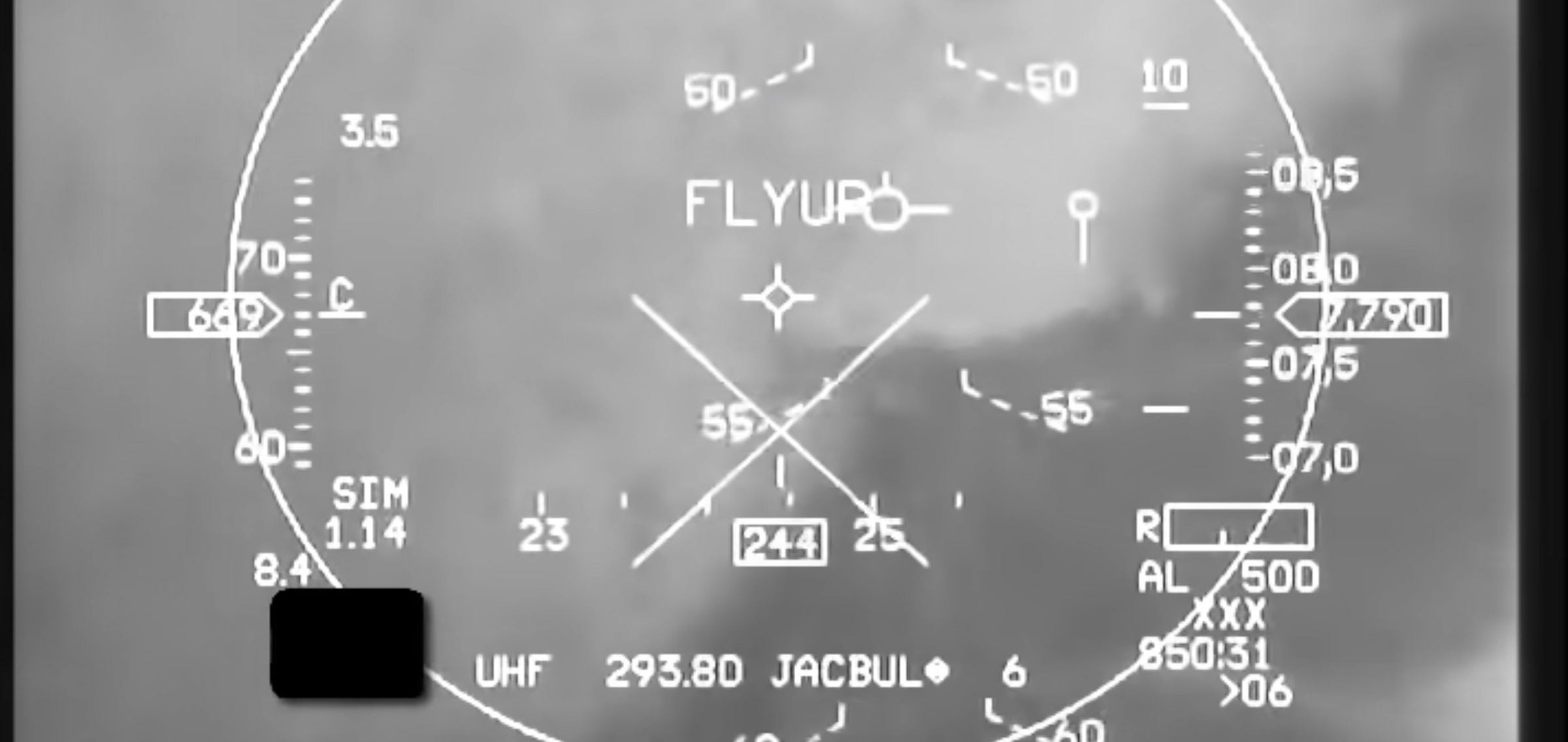

Below is a video of Auto-GCAS being tested on an F-16 fighter jet. It takes over when a crash is imminent – normally because G-forces have caused the pilot to black out and the plane is headed for the ground. It pulls the plane out of the collision course and then disconnects, handing back control to the pilot. In the video, after the plane levels out, you can hear a beep, and then Bitchin’ Betty announces “you’ve got it”, meaning control has been handed back to the pilot.

I love the idea of machines stepping in to save the day. Whether it’s Auto-GCAS, TCAS (which prevents airliners from colliding mid-air, and whose instructions pilots must follow even when air traffic control says otherwise), automatic braking on cars, implantable defibrillators watching for abnormal cardiac rhythms, or even just type checking when writing code, there’s something romantic about the eternal vigilance of machines.

It’s also a much easier automation problem to solve than having the machine do literally everything.

Instead of level 2/3 self-driving cars, where drivers have to step in at short notice if the self-driving system gets into trouble, we should have systems that are usually off but step in to prevent accidents that humans can’t avoid.

This is a strictly simpler engineering problem: you leave the driving and navigating largely to humans, with the computer optimised to detect imminent collisions, loss of control, sleepy drivers and other mistakes that humans make.

Similar issues occur in modern airliners, which have autopilot systems so sophisticated they can take off, navigate and land automatically (after being configured by the pilots). But these systems can disconnect suddenly, causing pilots to have to step in at short notice. Frequently the crew will be disoriented and situationally blind after hours of letting the autopilot do the work, leading them to make simple but deadly mistakes.

In the world of agentic AI, the handing off of human processes to machines is becoming all the more relevant. Increasingly humans will be fully out of the loop. Claude Code’s ‘auto mode’ lets Claude issue commands, and has a second classifier check those commands for safety – no human intervention required.

As a Claude Code enjoyer I’ve naturally been leaning on this mode a lot. It’s not an entirely unusual workflow; plenty of developers will write code with Claude and then have an OpenAI model review it. But I worry that having one Claude model analyse the behaviour of another is tempting fate: the two systems have overlapping training and perhaps correlated blind spots, so whatever one misses the other may well miss too.

Self-monitoring systems typically have an embedded asymmetry. Terrain-following radar isn’t a worse version of a pilot – it’s a different system which specifically monitors terrain and can adjust the plane’s attitude when a collision with the ground is imminent. An automatic defibrillator isn’t a worse version of a cardiologist – it’s a non-cardiologist watching for a very specific signal. Two Claudes, one reviewing the work of the other, don’t have this kind of asymmetry – they’re the same kind of mind reviewing the same work, with the same idea of what ‘good’ looks like.

In the case of one language model being used to monitor the outputs of an identical one, the redundancy is largely architectural. And nobody says “you’ve got it”, because there’s no-one to hand it back to.